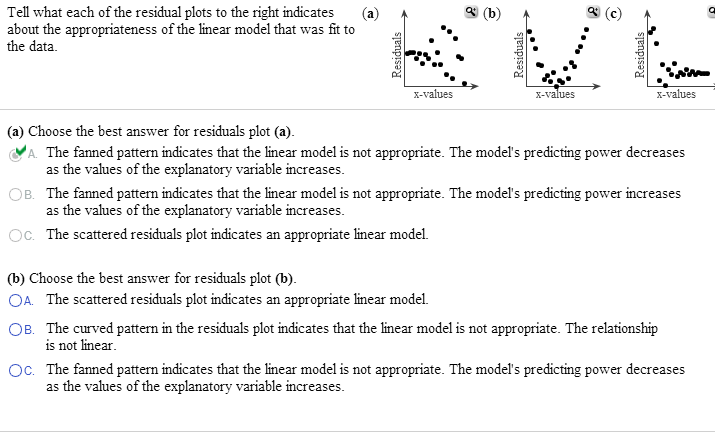

A residual is the difference between an observed value and a predicted value in regression analysis. It is calculated as:

Residual = Observed value – Predicted value

Recall that the goal of linear regression is to quantify the relationship between one or more predictor variables and a response variable. To do this, linear regression finds the line that best “fits” the data, known as the least squares regression line. This line produces a prediction for each observation in the dataset, but it’s unlikely that the prediction will exactly match the observed value. The difference between the observed value and the prediction is the residual.

If we plot the observed values and overlay the fitted regression line, the residual for each observation is the vertical distance between the observation and the regression line. An observation has a positive residual if its value is greater than the predicted value made by the regression line. Conversely, an observation has a negative residual if its value is less than the predicted value made by the regression line. Some observations will have positive residuals while others will have negative residuals, but for a least squares line fitted with an intercept, all of the residuals add up to zero.

Suppose we have a dataset with 12 total observations and we use some statistical software (like R, Excel, Python, or Stata) to fit a linear regression line to it. Using this line, we can calculate the predicted value of Y for each observation based on its value of X. For example, the first observation has an observed value of 41 and a predicted value of 35.67, so its residual is:

Residual = Observed value – Predicted value = 41 – 35.67 = 5.33

We can repeat this process to find the residual for every single observation. If we create a scatterplot to visualize the observations along with the fitted regression line, we’ll see that some of the observations lie above the line while some fall below it.

Residuals have the following properties:

- Each observation in a dataset has a corresponding residual. So, if a dataset has 100 total observations, the model will produce 100 predicted values, which results in 100 total residuals.
- The sum of all residuals adds up to zero.
- The mean value of the residuals is zero.

In practice, residuals are used for three different reasons in regression.

Assess model fit. Once we produce a fitted regression line, we can calculate the residual sum of squares (RSS), which is the sum of all of the squared residuals. For models fitted to the same data, the lower the RSS, the better the regression model fits the data.

Check the assumption of normality. One of the key assumptions of linear regression is that the residuals are normally distributed. To check this assumption, we can create a Q-Q plot, which is a type of plot that we can use to determine whether or not the residuals of a model follow a normal distribution. If the points on the plot roughly form a straight diagonal line, then the normality assumption is met.

Check the assumption of homoscedasticity. Another key assumption of linear regression is that the residuals have constant variance at every level of x. When this is not the case, the residuals are said to suffer from heteroscedasticity. To check whether this assumption is met, we can create a residual plot, which is a scatterplot of the residuals.

Constancy of the error variance is shown by the plot having about the same extent of dispersion of residuals (around zero) across different treatment groups.

Other things that can be examined by residual plots:

- Independence: if measurements are obtained in a time/space sequence, a residual sequence plot can be used to check whether the error terms are serially correlated.
- Outliers: these are identified by residuals with large magnitude.
- Existence of other important (but unaccounted for) explanatory variables: check whether the residual plots show a certain pattern.

Residuals for the package design example are given below.
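The calculations described in this article can be sketched in a few lines of Python. This is only a rough illustration under stated assumptions: the `x` and `y` values are made up for the sketch (they are not the 12-observation dataset or the package design data, neither of which is reproduced here), and the helper names `fit_line` and `residuals` are hypothetical. It fits a least squares line, computes one residual per observation, and then checks that the residuals sum to roughly zero before computing the RSS.

```python
from statistics import mean


def fit_line(x, y):
    """Least squares fit of y = b0 + b1 * x; returns (b0, b1)."""
    x_bar, y_bar = mean(x), mean(y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    b1 = sxy / sxx              # slope
    b0 = y_bar - b1 * x_bar     # intercept
    return b0, b1


def residuals(x, y, b0, b1):
    """Residual = observed value - predicted value, one per observation."""
    return [yi - (b0 + b1 * xi) for xi, yi in zip(x, y)]


# Hypothetical data, made up for this sketch only.
x = [1, 2, 3, 4, 5, 6]
y = [3, 4, 8, 9, 13, 14]

b0, b1 = fit_line(x, y)
res = residuals(x, y, b0, b1)
rss = sum(r ** 2 for r in res)  # residual sum of squares

print(f"intercept = {b0:.3f}, slope = {b1:.3f}")
print("residuals:", [round(r, 3) for r in res])
print("sum of residuals (should be about 0):", round(sum(res), 10))
print("RSS:", round(rss, 3))
```

Because the line is fitted by least squares with an intercept, the printed sum of residuals should be zero up to floating-point rounding, and the list should contain both positive and negative values. In practice a statistics package would normally do the fitting; the hand-written version is used here only to keep the arithmetic visible.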
